How to Conduct a Google Search Console Audit

There are many reasons why you would want to conduct an audit on your site. Maybe it’s not performing as you’d expect, or you’re having some sort of technical issue that you can’t explain.

A Google Search Console audit will help you cover every minute detail, from technical issues, to indexing, to content-related problems.

Best of all, you can conduct the entire audit using Google Search Console. Follow through each step, and you’ll have no problem identifying any issues that may be bogging down your site.

Getting Started

Let’s start with the basic stuff. If you haven’t used Google Search Console much, these first three steps will help introduce you to it so you can learn where to go and what to look for on the dashboard.

1. Familiarize Yourself With The Dashboard

Once you’re on the dashboard, take a look at the site’s performance. If you notice any dramatic drops in traffic, you’ll want to dig a little deeper.

You’ll find performance, indexing, experience, and enhancements on the main page. At the top, there’s a blank field where you can input a specific URL to inspect it and learn more about that page.

If you’ve never used Google Search Console before, you may have to verify your site’s ownership before gaining access to the data. There are several methods available for verification (HTML file upload, HTML tag, domain name provider, etc.). Choose the method that suits you best and follow the instructions to complete the verification process.

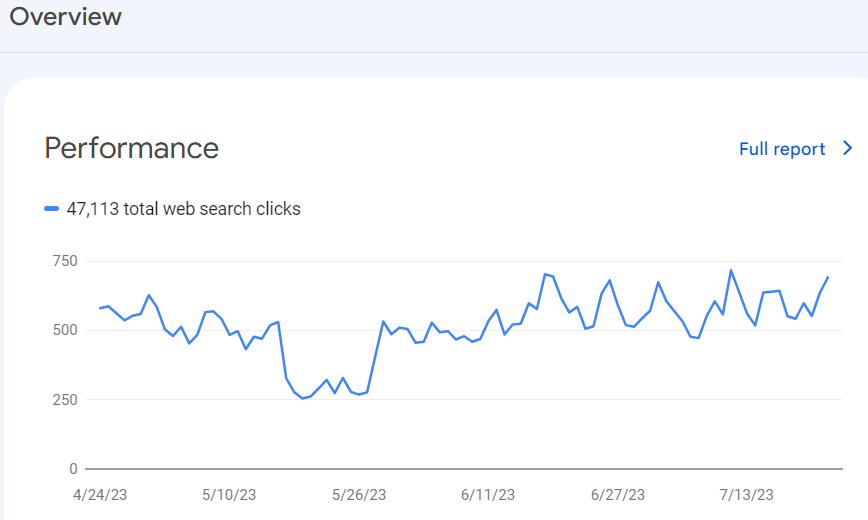

2. Check Traffic to Identify Recent Changes

If we take a look at the site above, we can see there was a pretty steep dip in traffic for a couple of weeks, but it’s rebounded since.

There’s always a reason for dramatic changes in traffic. Often, the cause is a Google algorithm update that reshuffled the SERPs.

If you’re in the middle of a reduction in traffic, you’ll want to look at your top-performing pages and keywords to see if you’ve lost any.

For example, you may have been ranking for the keyword “seo audit” a month ago, but if you check today, you might have dropped a few positions in the search results, which is costing you traffic.
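To make that comparison concrete, here is a minimal sketch that compares average positions for the same queries across two date ranges. The query names and numbers are made up for illustration, and the function name `find_position_drops` is hypothetical; in practice you would fill the two dictionaries from a performance report export.

```python
from typing import Dict, List, Tuple


def find_position_drops(
    previous: Dict[str, float],
    current: Dict[str, float],
    min_drop: float = 1.0,
) -> List[Tuple[str, float, float]]:
    """Return (query, old_position, new_position) for queries that lost ground.

    A larger position number means a lower ranking, so a drop is
    new_position - old_position >= min_drop. Queries that are missing
    from `current` are skipped in this sketch.
    """
    drops = []
    for query, old_pos in previous.items():
        new_pos = current.get(query)
        if new_pos is None:
            continue
        if new_pos - old_pos >= min_drop:
            drops.append((query, old_pos, new_pos))
    # Show the largest drops first
    drops.sort(key=lambda row: row[2] - row[1], reverse=True)
    return drops


# Hypothetical average positions for two date ranges
last_month = {"seo audit": 3.2, "site audit checklist": 7.0, "gsc guide": 5.5}
this_month = {"seo audit": 6.8, "site audit checklist": 6.5, "gsc guide": 5.9}

for query, old, new in find_position_drops(last_month, this_month):
    print(f"{query}: position {old} -> {new}")
```

The `min_drop` threshold is a judgment call; small movements of a fraction of a position are usually noise.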

Regardless of the cause, this is an important step in the Google Search Console SEO audit because you need to not only access this information but understand how to interpret it.

Another cause of a drop in traffic is a technical issue which we’ll get to later on.

3. Look for Security Issues

Security issues are an immediate red flag and something you’ll want to fix right away.

If you scroll down, you’ll find the security and manual actions section. Security issues cover problems such as hacked content or malware, while manual actions are penalties applied to your website by Google’s human reviewers. If Google determines that you’ve violated its guidelines, the site may be flagged and reviewed by an actual person.

Manual actions are different from algorithmic penalties, where a website’s ranking drops due to automatic algorithm updates. Manual actions, on the other hand, are applied manually by Google’s team after a thorough review.

The purpose of manual actions is to maintain the quality and relevance of search results by ensuring that websites follow Google’s guidelines and do not engage in practices that manipulate search rankings.

Some of the things that get you flagged are:

- Black hat linking

- Thin content that provides no value

- Spam

- Link cloaking

- Hacking or malware

If you click and open these sections and find anything other than “no issues detected,” you want to make this your number one priority because it’s likely resulting in a major dip in traffic and little to no rankings for your site.

Sitemaps and Crawling

Next, we’ll look at the structure of your website and make sure all relevant pages are accessible by Google. If Google can’t get to a page because it’s not indexed or it’s set up in a way that prevents it from being indexed, then you won’t be able to rank for those keywords.

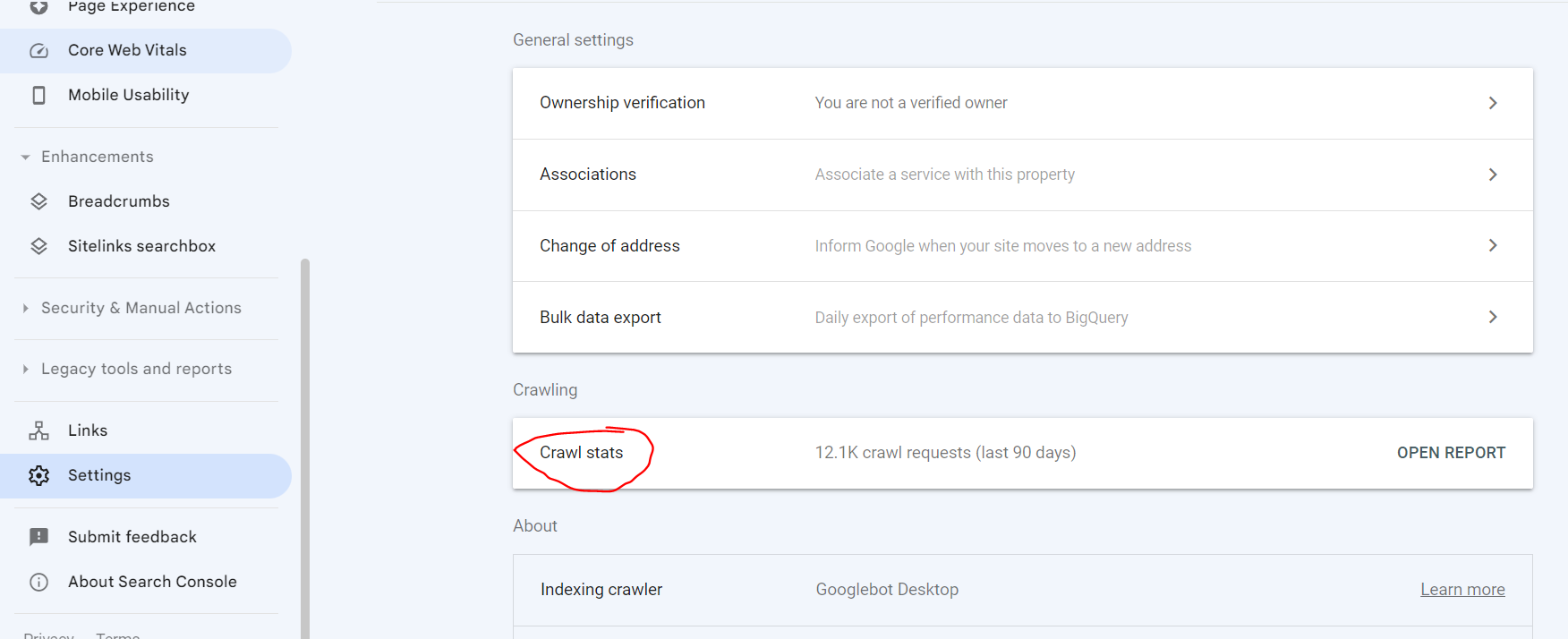

1. Make Sure Google is Crawling All Pages

Go to settings > crawl stats. Once you open that report, it’ll tell you how often Google is crawling your site. If you’re regularly adding new content to the site, then the number of crawl requests from Google should be increasing over time.

You’ll also want to look at this section because it tells you what pages are not being crawled and for what reason.

For example, a 404 error is a page that isn’t loading or can’t be found. In the case of this website, it’s only nine pages, and they are category pages that we don’t want Google to crawl because we don’t care if they rank.

The bottom line is you want Google to crawl all relevant pages and ignore the ones you don’t need it to crawl. This section of GSC will give you this information.
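If you copy the crawled URLs and their response codes out of the report, a short script can summarize them. This is only a sketch: the rows below are invented, and `summarize_statuses` is a hypothetical helper, not part of any GSC tooling.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def summarize_statuses(rows: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Group (url, status_code) rows into a few readable buckets."""
    buckets: Counter = Counter()
    for _url, status in rows:
        if 200 <= status < 300:
            buckets["ok"] += 1
        elif 300 <= status < 400:
            buckets["redirect"] += 1
        elif status == 404:
            buckets["not found"] += 1
        elif 400 <= status < 500:
            buckets["other client error"] += 1
        elif status >= 500:
            buckets["server error"] += 1
        else:
            buckets["other"] += 1
    return dict(buckets)


# Hypothetical rows copied from a crawl report
crawl_rows = [
    ("https://example.com/", 200),
    ("https://example.com/blog/seo-audit/", 200),
    ("https://example.com/category/old/", 404),
    ("https://example.com/old-post/", 301),
]

print(summarize_statuses(crawl_rows))
```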

2. Check robots.txt

If there is a page you don’t want Google to crawl, you can block crawlers from that page using robots.txt.

The robots.txt file is a simple text file placed at the root directory of your website and serves as a set of instructions to web crawlers about which parts of your site they are allowed or disallowed to access.

To do this, you must access your hosting account and navigate to the root directory. In the robots.txt file, you can add a “Disallow” rule followed by the URL path you want to block.
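Before uploading a change, you can sanity-check your rules locally. The sketch below uses Python’s standard `urllib.robotparser` module against a made-up robots.txt; the paths and the `example.com` URLs are placeholders. Note that this parser does simple prefix matching and may not mirror every detail of how Googlebot interprets wildcards.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are placeholders
ROBOTS_TXT = """\
User-agent: *
Disallow: /category/
Disallow: /tag/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given rules allow `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for url in (
    "https://example.com/category/shoes/",
    "https://example.com/blog/seo-audit/",
):
    status = "allowed" if is_crawlable(ROBOTS_TXT, url) else "blocked"
    print(f"{url}: {status}")
```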

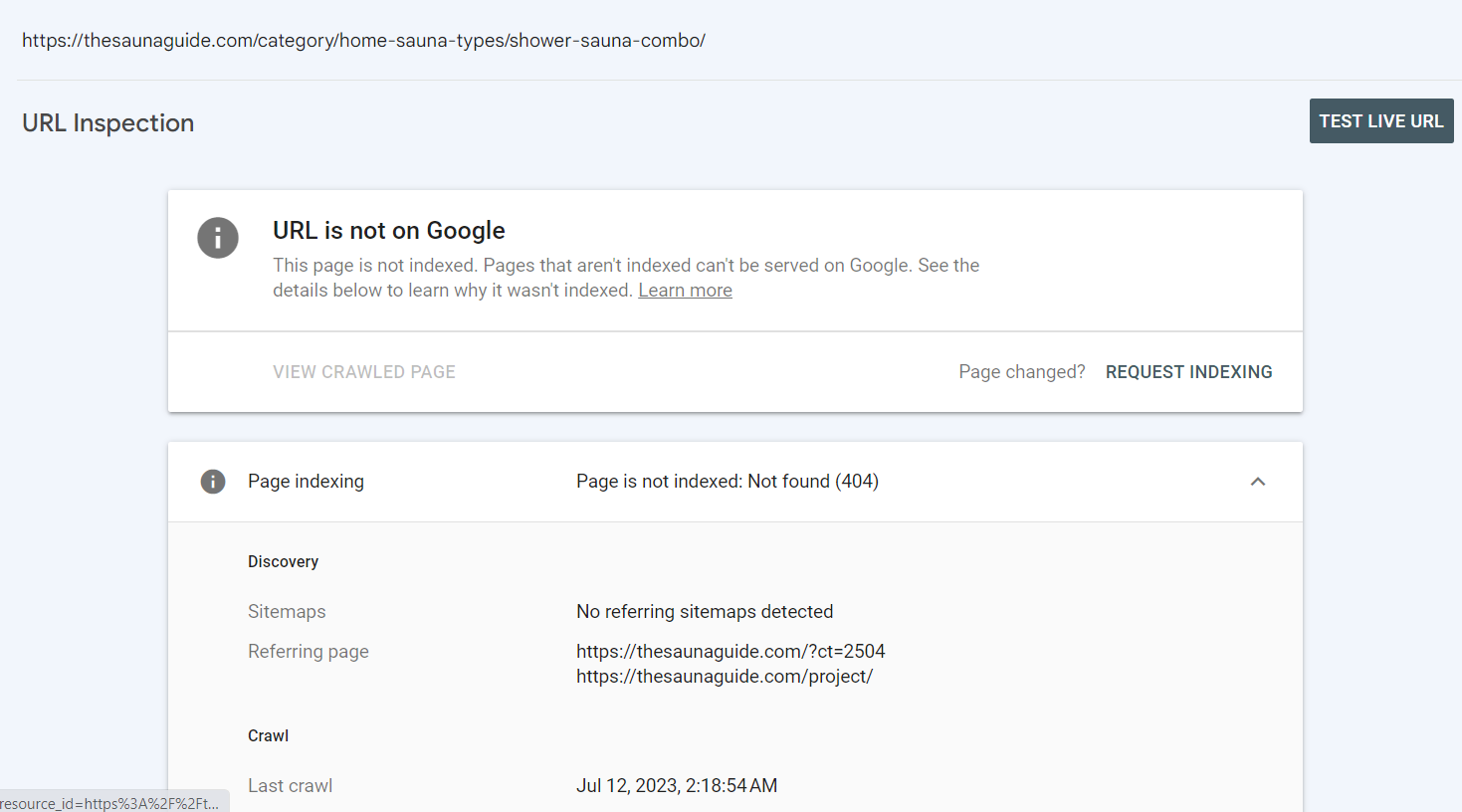

Now if you want to verify that a page is not being crawled, you’ll use the URL inspection field at the top of GSC.

Once you enter the URL, you’ll want to see a result like this.

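This means that Google is blocked from crawling the page, so your crawling resources are not being wasted on it. Keep in mind that a blocked URL can still end up indexed if other pages link to it, so use a noindex tag on a crawlable page when the goal is to keep it out of the search results.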

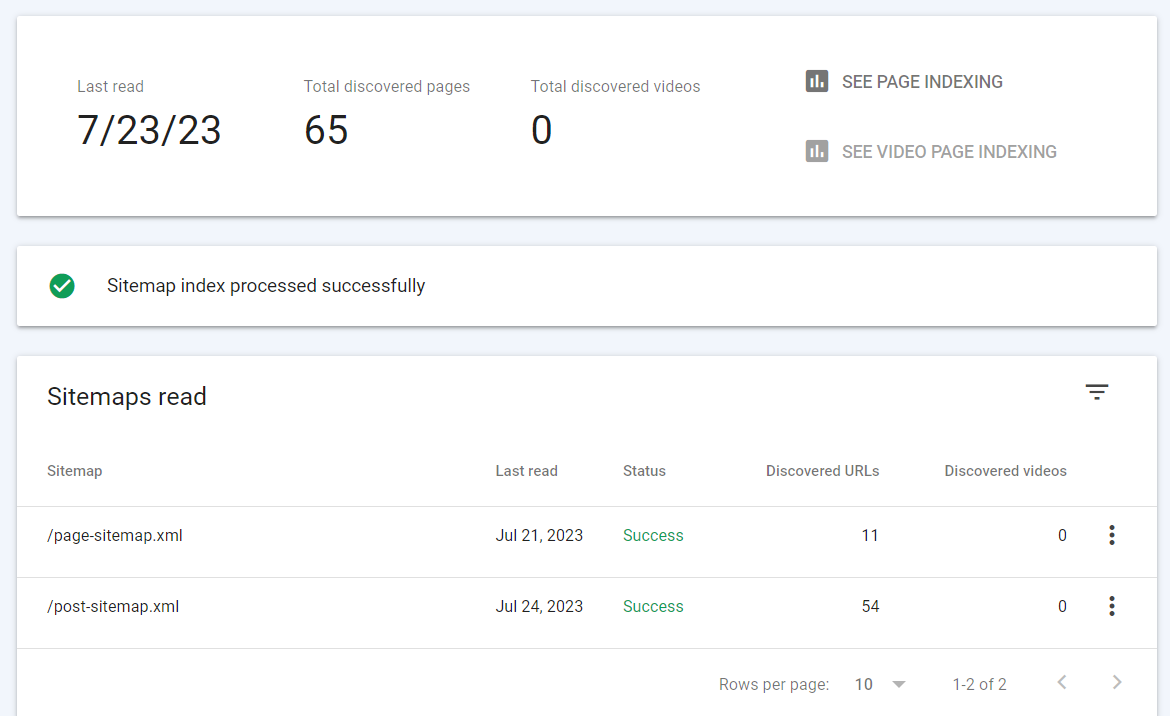

3. See if Sitemaps Have Been Submitted

A sitemap is an essential component of a website, and its importance lies in helping search engines understand the structure and organization of your site’s content. It serves as a roadmap for search engine crawlers, guiding them to discover and index all the important pages on your website.

You can use the sitemaps section of Google Search Console to identify your submitted sitemaps. If you’ve never submitted a sitemap, this is something you’ll want to do right away. There are a number of tools to help you do it.

4. Check XML sitemap

Chances are, the sitemap you find in GSC is an XML sitemap. You can tell by checking whether the sitemap URL ends in “.xml.”

Go ahead and open that up. You’ll see a page similar to this.

There are a couple of things you want to look at here. First, make sure the last read date is recent. If it’s taking Google longer than a week to crawl your website, it’s likely due to a manual action, poor content, or the site being really new.

Look below that and make sure it says “sitemap index processed successfully.” This means that the sitemap is crawlable, and all pages that should be discovered are discoverable. If you have an error message here, GSC will provide you with the steps needed to fix the issue.
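If you want to see what is actually inside a sitemap, you can parse it yourself. The following sketch reads a small, made-up sitemap with Python’s standard XML parser and lists each URL with its `lastmod` date, if any. It assumes a plain `urlset` sitemap; a sitemap index file uses different element names and would need its own handling.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Tuple

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content for illustration
SAMPLE_SITEMAP = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2024-01-15</lastmod>
  </url>
  <url>
    <loc>https://example.com/blog/seo-audit/</loc>
  </url>
</urlset>
"""


def list_sitemap_urls(xml_text: str) -> List[Tuple[str, Optional[str]]]:
    """Return (loc, lastmod) pairs; lastmod is None when the tag is absent."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    entries = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=SITEMAP_NS)
        entries.append((loc, lastmod.strip() if lastmod else None))
    return entries


for loc, lastmod in list_sitemap_urls(SAMPLE_SITEMAP):
    print(loc, lastmod or "(no lastmod)")
```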

5. Review Internal Linking Policy

Finding the best internal links is a great way to improve site structure and make your site easier to crawl. Think of an internal link as one strand of a web that connects one section of your site to the others. When you do this effectively using relevant pieces of content and high-quality anchor text, it helps both Google and users navigate your site more easily.

Remember, all of these steps you’re taking in the audit are to make your site easier to use. The easier the site is to navigate, the more keyword opportunities Google will find.

Use Link Whisper to optimize your internal linking strategy. This tool suggests internal links for you based on relevancy and anchor text. It takes the thought out of the process so you don’t have to worry about figuring it out yourself or making a mistake.

Page Experience and Core Web Vitals

The on-page experience of your website is a big part of its overall performance. If the site loads slowly, doesn’t load properly, or has a lot of technical issues, you can’t expect to rank very well.

Luckily, a Google Search Console audit is just about all you need to determine if there are any serious issues with your site’s performance.

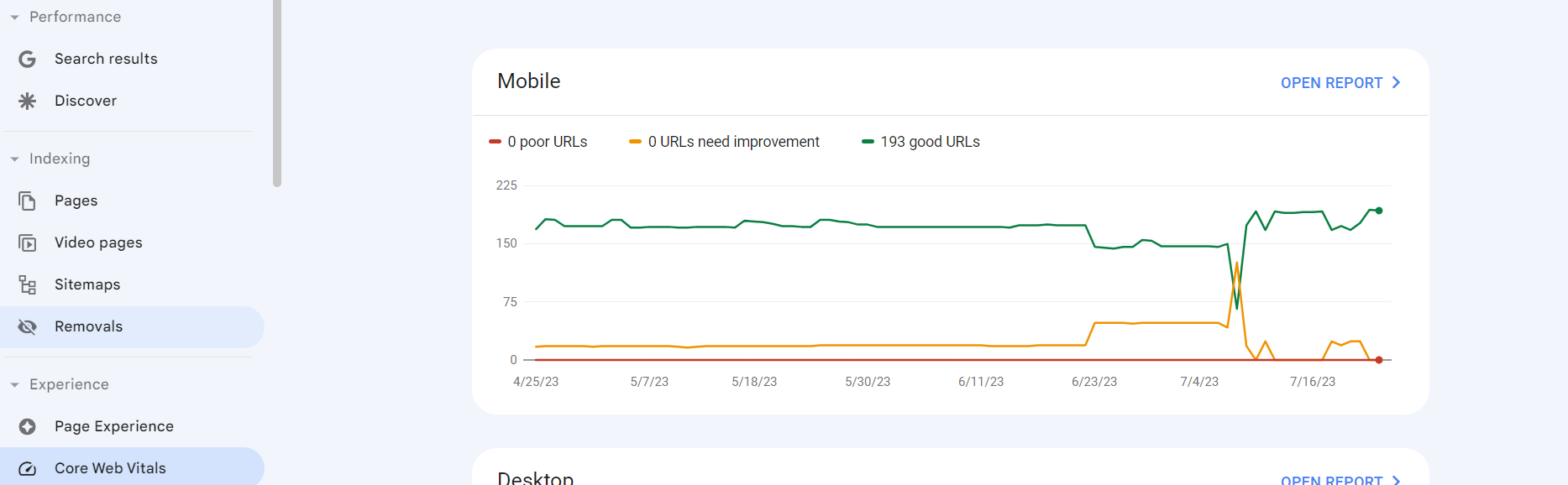

1. Review Core Web Vitals and Address Issues

Core Web Vitals are made up of three important metrics:

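Largest Contentful Paint (LCP) – The amount of time it takes for the largest element to load and become visible.

First Input Delay (FID) – The delay between a user’s first interaction, such as a click or tap, and the moment the browser begins responding to it. Google replaced FID with Interaction to Next Paint (INP) in March 2024, so current reports show INP instead.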

Cumulative Layout Shift (CLS) – The amount of unexpected layout shifts during the loading process.

These three factors will impact the overall performance of your website.
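GSC groups URLs into “good,” “needs improvement,” and “poor.” As a rough sketch of how those labels map to numbers, the snippet below rates a value for each of the three metrics listed above using Google’s published thresholds. The function name `rate_metric` is hypothetical, and the sample values are invented.

```python
from typing import Dict, Tuple

# (upper bound for "good", upper bound for "needs improvement") per metric
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "LCP": (2.5, 4.0),   # seconds
    "FID": (100, 300),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate_metric(metric: str, value: float) -> str:
    """Return 'good', 'needs improvement', or 'poor' for a metric value."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for one URL
sample = {"LCP": 2.1, "FID": 250, "CLS": 0.3}
for metric, value in sample.items():
    print(f"{metric} = {value}: {rate_metric(metric, value)}")
```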

In some cases, these issues resolve themselves, but if you notice an abundance of poor URLs or URLs needing improvement, it could have something to do with a template or layout that you’re using.

Make sure all your images are compressed and properly sized, limit non-essential scripts, minimize JavaScript to reduce loading time, and avoid excessive dynamic content to prevent elements from jumping around as the page loads.

Keeping your site format simple is a great way to prevent issues with Core Web Vitals. While a bunch of bouncing buttons, videos, and sliding text might look cool, it can negatively impact your website’s technical performance.

2. Make Sure All Pages Render Properly

If you have a massive website, it isn’t realistic to check every URL. I recommend checking the ones that are underperforming.

Enter the URL you want to check in the search bar at the top and hit “test live URL.”

After about a minute, you’ll receive a new report that explains if the URL is accessible to Google, can be indexed, looks good on mobile, and is set up to appear in the Google search results.

3. Check Mobile-Friendliness

As most of us know, Google uses “mobile-first” indexing, which means it primarily uses the mobile version of a page for indexing and ranking. If your site doesn’t look good on a phone, it doesn’t matter how good it looks on desktop. The majority of website visitors come from mobile devices.

You can determine this by going to experience > mobile usability. As you can see above, all the pages on this website are usable on mobile. Some examples of issues you will encounter that make a page “not usable” are:

- Elements too close together

- Content wider than the screen

- Font is too small

While these issues don’t make a page totally unusable, they reduce the chances of you outranking your competition because the page isn’t user-friendly, according to Google.

If you have any issues with mobile friendliness, you’ll want to address them immediately before continuing.

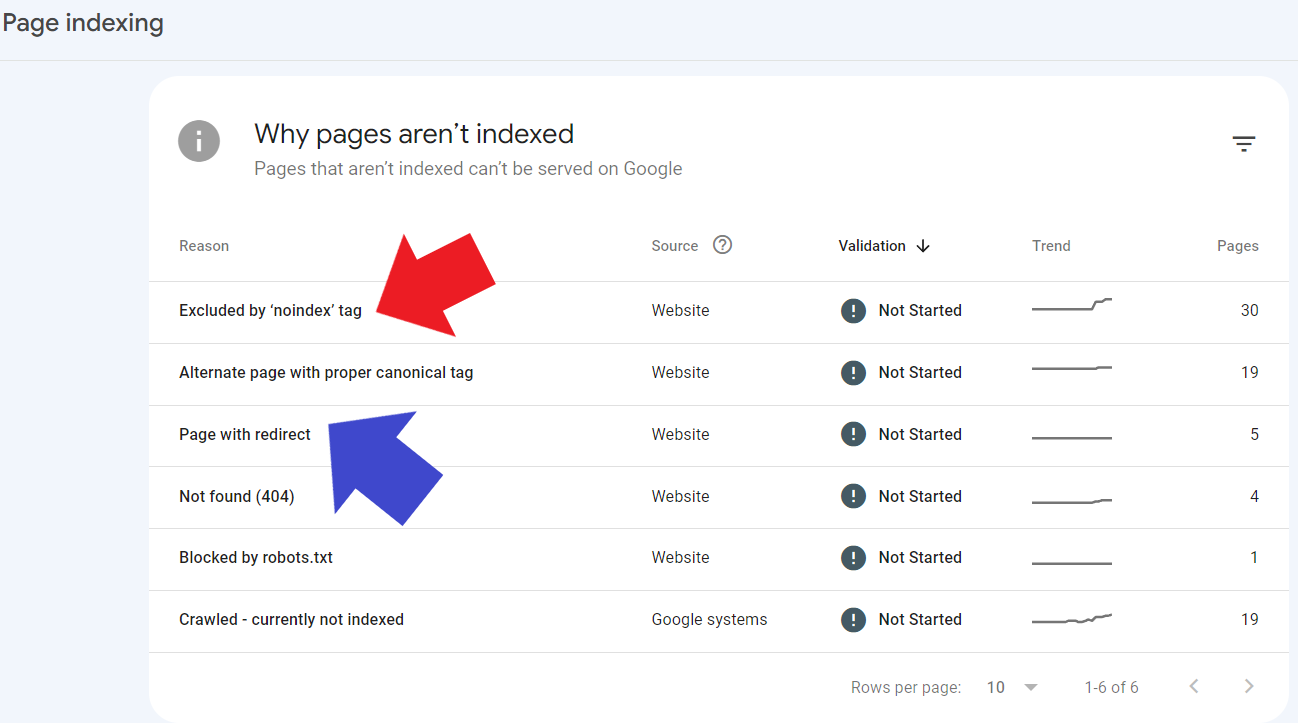

Status Codes and Page Errors

It’s likely that you found some type of error when looking through your pages in Google Search Console. Keep in mind that an error is not always an actual error. Some exclusions are intentional, like the category pages mentioned earlier, and certain redirects are done on purpose.

The main thing is, you want to know what pages are being redirected or blocked and for what reason. Here’s how you find that out.

1. Look For Any 301 or 302 Redirects

Go to indexing > pages in GSC. Scroll down, and you’ll see a section like the image above. The phrase by the red arrow marks pages that are purposely excluded from indexing because they have a “noindex” tag. This means that you do not want these pages to appear in Google’s search results.

Some examples of pages you should have a noindex tag for are:

- Terms of use pages

- Policies

- About us

- Category pages

- Affiliate disclosures

- Contact us pages

Next to the blue arrow are pages with 301 or 302 redirects. Make sure to open this section and look at the URLs inside. If you notice any pages that are being redirected and shouldn’t be, you’ll want to check the URL, host, and backend coding of the page to find the redirect code.
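When you review redirected URLs, it helps to trace where each one ends up. The sketch below follows redirects through a made-up table of responses and reports the final status or a loop. Everything here is hypothetical sample data; a real check would fetch each URL and read the status code and `Location` header.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical crawl data: path -> (status code, redirect target or None)
RESPONSES: Dict[str, Tuple[int, Optional[str]]] = {
    "/old-post/": (301, "/new-post/"),
    "/new-post/": (200, None),
    "/sale/": (302, "/summer-sale/"),
    "/summer-sale/": (301, "/sale/"),
}

REDIRECT_CODES = (301, 302, 307, 308)


def follow_redirects(
    start: str,
    responses: Dict[str, Tuple[int, Optional[str]]],
    max_hops: int = 10,
) -> Tuple[List[str], str]:
    """Return (URLs visited in order, outcome).

    The outcome is the final status code as a string, "loop" if a URL
    repeats, or "too many hops" if the chain exceeds max_hops.
    Unknown URLs are treated as 404s.
    """
    chain = [start]
    current = start
    for _ in range(max_hops):
        status, target = responses.get(current, (404, None))
        if status in REDIRECT_CODES and target:
            if target in chain:
                chain.append(target)
                return chain, "loop"
            chain.append(target)
            current = target
        else:
            return chain, str(status)
    return chain, "too many hops"


for path in ("/old-post/", "/sale/"):
    visited, outcome = follow_redirects(path, RESPONSES)
    print(" -> ".join(visited), f"[{outcome}]")
```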

2. Make Sure All Redirects Have The Right Status

Similar to the previous point, if you have any redirects or noindex tags, you want to make sure they’re right. For example, pages with a noindex tag should be pages that you don’t want Google to index. This keeps those pages out of the search results so the focus stays on the pages you actually want to rank.

If pages have noindex tags and you are actually trying to rank them, it will be impossible because Google drops noindexed pages from its index, even though it can still crawl them. If you have a noindex tag on a blog post, Google will leave that post out of the search results, so it can’t rank.
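To spot a stray noindex tag, you can scan a page’s HTML for a robots meta tag. This is a minimal sketch using Python’s standard `html.parser`; the class and function names are hypothetical, and it only looks at meta tags, not at an `X-Robots-Tag` HTTP header, which can also carry a noindex directive.

```python
from html.parser import HTMLParser
from typing import List


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page snippets
blocked = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
open_page = '<html><head><meta name="robots" content="index, follow"></head></html>'

print(has_noindex(blocked))
print(has_noindex(open_page))
```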

3. Check for 404 Pages

A 404 happens when a visitor or Googlebot requests a URL and the server cannot find a page at that address. Again, sometimes this is done on purpose, and sometimes it’s not. Generally, a 404 is an error, so you’ll want to fix these.

Changing URLs or deleting content are the main causes of 404 errors. If someone saved a URL for later use and you changed it, they’ll enter the old URL and receive a 404 error page telling them that the content can’t be found. A 301 redirect from the old URL to the new one prevents this.

These pages are important for user navigation, but you don’t want to have too many 404 errors on your site. If the goal is to keep search engines away from a page, use a noindex tag to keep it out of the index or a robots.txt rule to stop it from being crawled, rather than letting it return a 404.

Traffic and Content

Last but not least, as a Google site auditor, you want to look at the content you’re ranking for, its performance, and how you can improve your rankability in the eyes of Google. I have a few expert tips that may help you.

1. Find Keywords You Rank For But Aren’t Using

If you go to your site’s performance and scroll down, you’ll find all the top queries that you rank for. Believe it or not, you might not even have some of these phrases on your page, yet you still rank for them.

A great way to give your site a little boost is to identify these keyword opportunities in GSC and then go back in and add them to the content.

For example, this website ranks pretty well for the keyword “smart soccer ball.” If I look through the article about these balls and don’t see that keyword, I should add it to the article a few times. This can be the difference between a middle of page one ranking and a top of page one ranking.
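Checking for missing phrases by hand gets tedious, so here is a small sketch of the idea. It takes the text of an article and a list of queries and returns the queries that never appear in the text. The article text, the query list, and the name `missing_queries` are all made up for illustration, and the match is a simple case-insensitive substring check.

```python
import re
from typing import Iterable, List


def _normalize(text: str) -> str:
    """Lowercase and collapse runs of whitespace to single spaces."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def missing_queries(page_text: str, queries: Iterable[str]) -> List[str]:
    """Return the queries that do not appear anywhere in the page text."""
    normalized_page = _normalize(page_text)
    return [q for q in queries if _normalize(q) not in normalized_page]


# Hypothetical article text and queries from the performance report
ARTICLE = "This smart football tracks spin and speed during soccer training."
QUERIES = ["smart soccer ball", "soccer training"]

print(missing_queries(ARTICLE, QUERIES))
```

A substring check will miss plurals and reordered words, so treat the output as a list of candidates to review rather than a final answer.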

Go through and do this for as many pages as you can. This might sound like a lot of effort, and it is. You can use a Google Search Console SEO audit tool like Ranklogic to find new keyword opportunities much faster.

2. Analyze Top Performing Pages

Go to the “pages” section in the performance tab and filter the pages by highest to lowest clicks. These are your top-performing pages.

Prioritize your keyword research around these pages. If an article ranks in the 2-8 spot on page one, adding a few keywords identified by Google can help you earn that coveted number one spot.
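As a sketch of that filter, the snippet below keeps pages whose average position falls between 2 and 8 and sorts them by clicks. The rows are invented sample data shaped like a performance export, and `striking_distance` is a hypothetical name.

```python
from typing import Dict, List


def striking_distance(
    rows: List[Dict], low: float = 2.0, high: float = 8.0
) -> List[Dict]:
    """Return rows with low <= position <= high, highest clicks first."""
    candidates = [row for row in rows if low <= row["position"] <= high]
    return sorted(candidates, key=lambda row: row["clicks"], reverse=True)


# Hypothetical rows from the pages tab of the performance report
page_rows = [
    {"page": "/smart-soccer-ball/", "clicks": 420, "position": 3.4},
    {"page": "/best-cleats/", "clicks": 900, "position": 1.2},
    {"page": "/goalkeeper-gloves/", "clicks": 510, "position": 6.1},
    {"page": "/shin-guards/", "clicks": 35, "position": 14.8},
]

for row in striking_distance(page_rows):
    print(row["page"], row["clicks"], row["position"])
```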

3. Review Underperforming Content

On the flip side, you want to take a look at your underperforming content as well. Filter by lowest clicks and highest impressions to find the content that is ranking somewhat well but not getting any clicks.
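The same idea can be expressed as a filter on click-through rate. This sketch flags pages with plenty of impressions but a low CTR; the cutoffs of 1,000 impressions and 1% CTR are arbitrary assumptions you should tune, and the rows and the name `low_ctr_pages` are hypothetical.

```python
from typing import Dict, List


def low_ctr_pages(
    rows: List[Dict], min_impressions: int = 1000, max_ctr: float = 0.01
) -> List[Dict]:
    """Return pages with enough impressions whose CTR is at or below max_ctr."""
    flagged = []
    for row in rows:
        if row["impressions"] < min_impressions:
            continue
        ctr = row["clicks"] / row["impressions"]
        if ctr <= max_ctr:
            flagged.append({**row, "ctr": ctr})
    # Pages with the most impressions are the biggest missed opportunities
    return sorted(flagged, key=lambda row: row["impressions"], reverse=True)


# Hypothetical rows from the pages tab of the performance report
report_rows = [
    {"page": "/a/", "clicks": 12, "impressions": 4800},
    {"page": "/b/", "clicks": 300, "impressions": 5200},
    {"page": "/c/", "clicks": 1, "impressions": 150},
    {"page": "/d/", "clicks": 20, "impressions": 9000},
]

for row in low_ctr_pages(report_rows):
    print(f'{row["page"]}: {row["impressions"]} impressions, CTR {row["ctr"]:.2%}')
```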

Consider changing up your meta descriptions and title tags to make them more enticing. Check to make sure you’re targeting the right keywords with the right intent.

If you’ve done that already, make sure there are no technical issues using the many methods we’ve discussed so far.

Final Thoughts

Well, that’s it. You’ve officially performed a Google Search Console audit without ever having to leave or use another tool. You should be using GSC on a regular basis because it provides nearly everything you need to run a successful website.

Keep in mind that the information in GSC comes directly from Google, so what better way to figure out what’s working and what isn’t?

Google is also a big fan of great internal linking structure, and Link Whisper can help you get this done faster and more effectively. Link Whisper uses AI to suggest links as you write and helps you find better internal links in existing pieces of content.